Algorithm GenESeSS: Abductive Learning Of Quantized Stochastic Processes

- I. Chattopadhyay and H. Lipson,

"Abductive learning of quantized stochastic processes with probabilistic finite automata", Philosophical Transactions of The Royal Society A, Vol. 371(1984), Feb 2013, pp 20110543

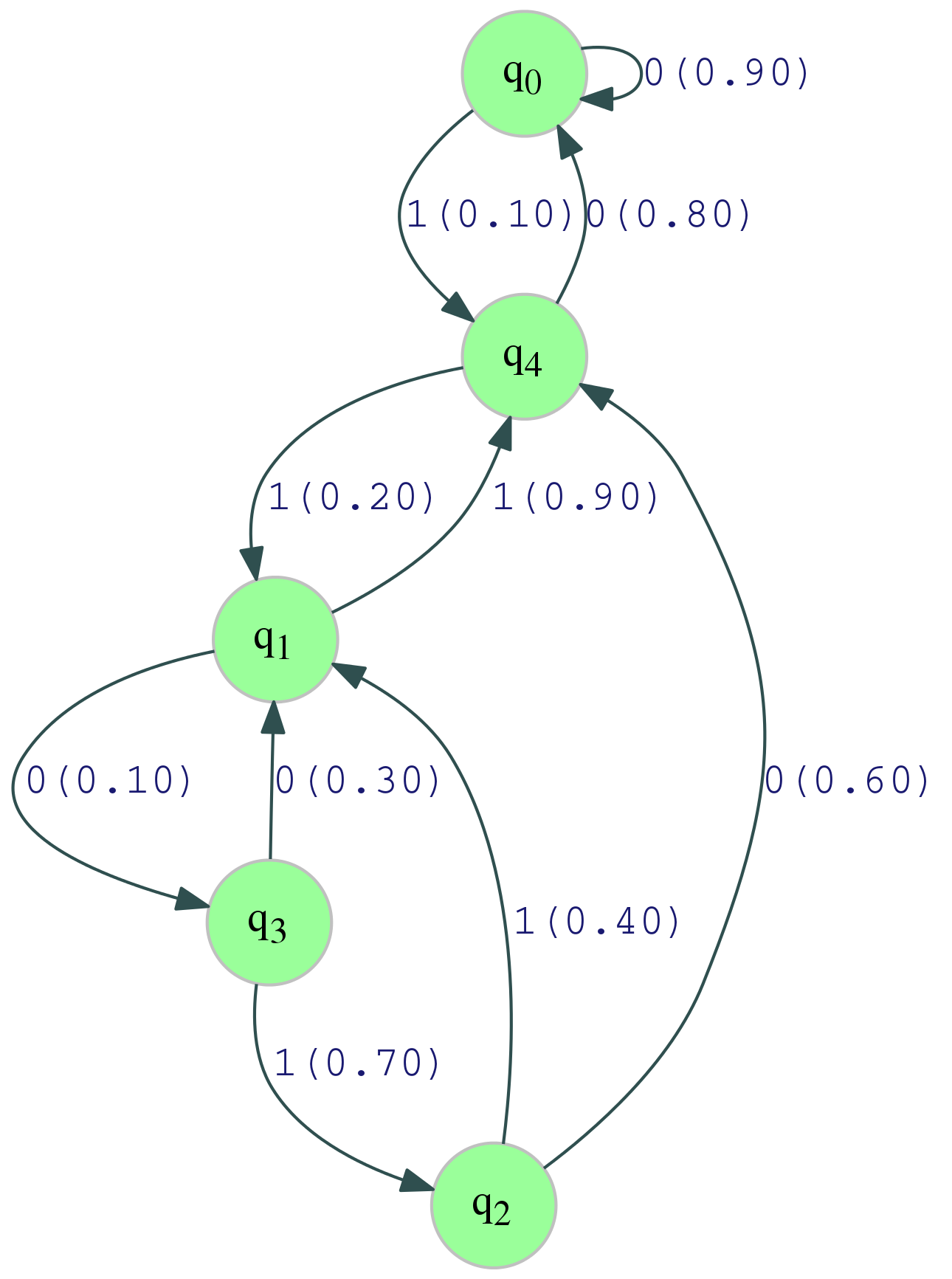

I am interested in the automated inference of Quantized Stochastic Processes (QSP): stochastic dynamical systems that evolve over discrete time, and yield sample paths, which are sequences over a pre-specified symbolic alphabet. Under the assumptions of stationarity and ergodicity, any such quantized process may be generated by a probabilistic automata. A probabilistic automata, syntactically, is a directed graph whose edges are labeled with alphabet symbols, and the associated transition probabilities. A probabilistic automata with a finite number of nodes (in its minimal description) is a Probabilistic Finite State Automata (PFSA). For example, in the figure below, the directed graph with labeled edges (the label 0|0.7 implies that the symbol associated with this edge is 0, which transpires with probability 0.7 from the base state q1) is an example of a PFSA, which generates the sample path shown on right.

For a dense introduction to probabilistic automata used in this analysis (which is a small fraction of the literature), take a look here.

While it is easy to see that PFSA generate ergodic and stationary QSPs, the inverse problem of inferring such models from data is significantly more difficult. Algorithm genESeSS , which is an acronym for generator Extraction From Self-Simialr Semantics , is designed to accomplish this; and provably identifies any stationary ergodic QSP with finite number of causal states.

INPUT DATA:

000000000000000000100010000000000010010001110000001010000000000000001100100010000000000000000000000000 000100010010000000000000000000000000000000000000000000000000001000000000000000000000000001000100000000 000000001000000111000000000011100100000000100000001001000000000000000000000000000000000000000000000000 000000100000000000000100110010000000000000000000000001000000000001000000000000100000000111101110000011 100000010000000000000000000000000000010000000001000010000101000000001000000000100100000000000100101110 000010000000000000000000000000010000011100000000000000000111001110000001000000100000000000000000000100 001000000000000000010100000000001000000010001000000011100000000000000010000000000000000000010100000000 000000000000000000000111110000000000000000010000000000000000000000000000000100000000001000000001000010 000000000000010000010000000000000000001000000001000000000100000000000000000000001000100100000000101110 000010001000000000000000000000000000000000000000000000000000000100001000001110000000010000000000000000 000000000000000000000001000000000000000000000100000100000000000100000000000000000000000000001001000001 111100001000000000000111000000000000000000000000000001000000000000000000000000001000000001000000100100 000000000001111111001000000000000000001100100000000111000000001001110000000000000000000000000000000001 000000000000000000000011100000010000000010001000000000000000000000010000010010000000000001110000000000

INFERRED MODEL:

This inference problem is a case of abduction . The choice of the particular hypothesis class (PFSAs) subsumes the basic principle of how we perceive our systems of interest to generate the observed traces; namely at any given instant a QSP is assumed to be in a unique hidden causal state, which has a well defined probability of generating the next observed symbol. Thus, assumption of the PFSA framework fixes the rule by which the sequential observations are being generated, and we seek the correct hypothesis, i.e., the correct probabilistic automata, that explains the observed symbolic trace. The problem of inferring the hypothesis, given the observation sequence and the generating rule is known as abduction, as opposed to induction, which strives to infer the generating rules from a knowledge of the hypothesis, and the observation set. For a rigorous overview of abductive reasoning, refer to G. Paul's paper .

Algorithm genESeSS is applicable to systems of interest that are termination-free, have no dependence on initial conditions, and are not necessarily restricted to have short memory. The probabilistic automata it infers have no initial and terminal states, and are not restricted to any particular class of graph structures. In contrast to reported techniques, genESeSS can capture behaviors that exhibit long range dependencies; thus significantly expanding the space of inferable processes. genESeSS is based on a measure-theoretic framework that induces probabilistic generators from a QSP, and shows that the class of ergodic, stationary QSPs, with finite number of causal states, is indeed PAC-learnable.

Ckeck out algorithm genESeSS here for the published paper. A presentation on the algorithm can be found here. Drop me an email if you wish to try genESeSS on your own data.